A timestamp indicates the date and time in a digital system by recording the interval that has elapsed since a fixed reference point. Most systems use Unix time (seconds since January 1, 1970, UTC) or formatted strings such as ISO 8601 to keep records consistent across global networks, blockchain ledgers, and modern 64-bit computing environments as of 2026.

The Core Logic: How Digital Systems Define Time

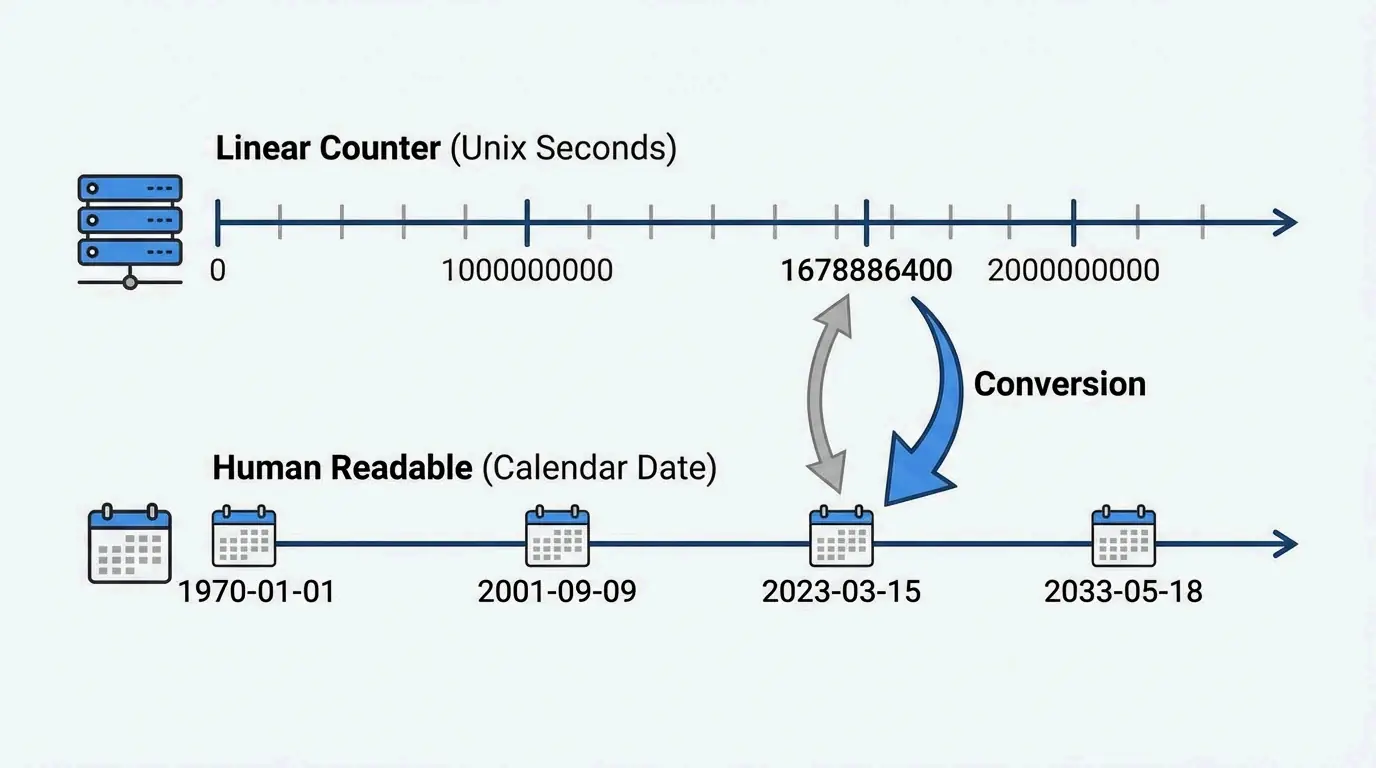

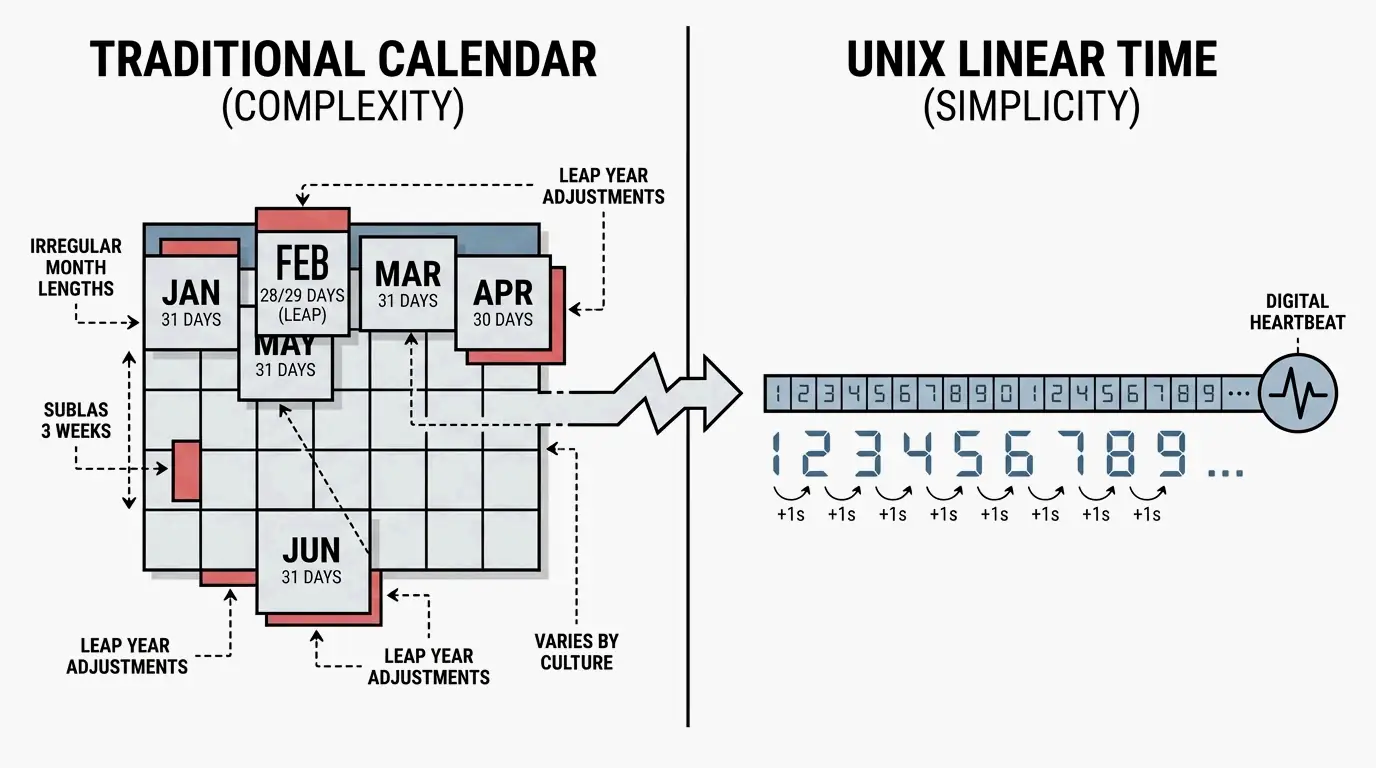

In computing, a timestamp is more of an operational measurement than a simple label. According to Merriam-Webster, a digital timestamp is an indication of the date and time recorded as part of a signal or file, marking exactly when an event occurred. While humans rely on descriptive names like “April” or “Tuesday,” digital systems treat time as a continuous linear counter.

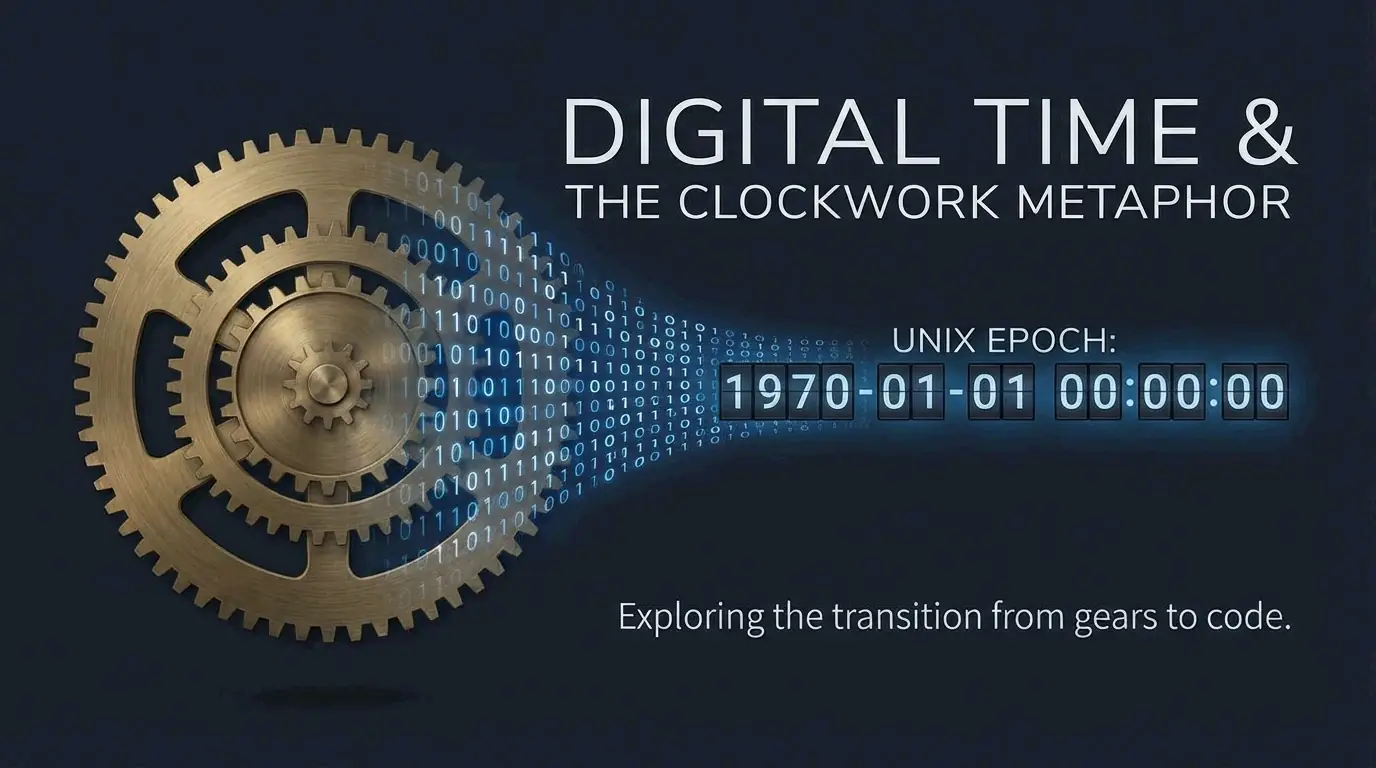

The foundation of this counting system is the “Epoch,” which acts like a universal starting line. Most modern operating systems calculate the current moment by counting the number of increments that have passed since this specific point. This process relies on hardware oscillators—usually small quartz crystals—that turn physical vibrations into digital ticks, allowing the system clock to move forward with high precision.

To keep everything consistent across different hardware, the world uses Coordinated Universal Time (UTC). As noted by Wikipedia, Unix time reached 1,000,000,000 seconds on September 9, 2001 (at 01:46:40 UTC), a milestone nicknamed the “billennium.” It shows how these counters track our history in a purely numerical format.
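As a quick check of that milestone, the short Python sketch below converts the counter value 1,000,000,000 into a UTC calendar date using only the standard library. The variable names are illustrative and not taken from any cited source.

```python
from datetime import datetime, timezone

BILLENNIUM = 1_000_000_000  # seconds since the Unix Epoch

# Interpret the raw counter as an instant in UTC.
moment = datetime.fromtimestamp(BILLENNIUM, tz=timezone.utc)
print(moment.isoformat())  # 2001-09-09T01:46:40+00:00
```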

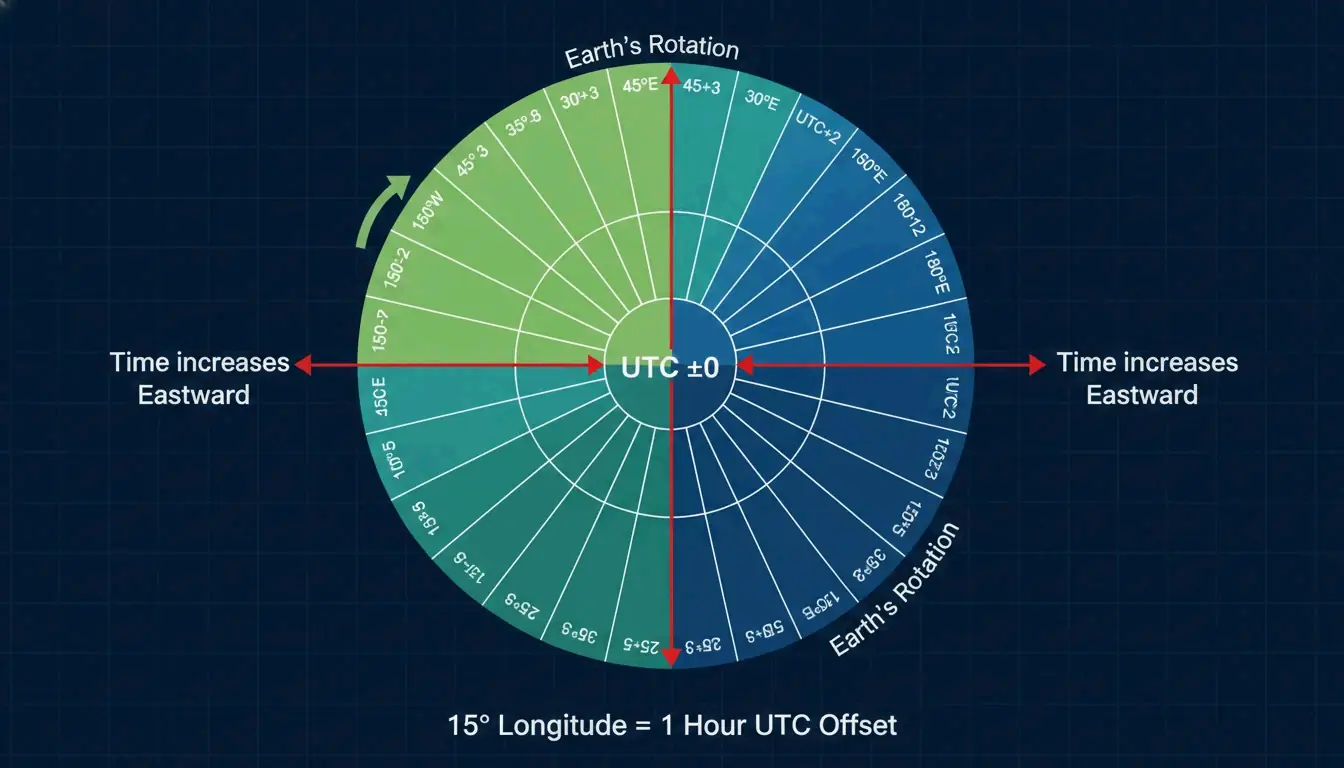

Why does the world rely on UTC (Coordinated Universal Time)?

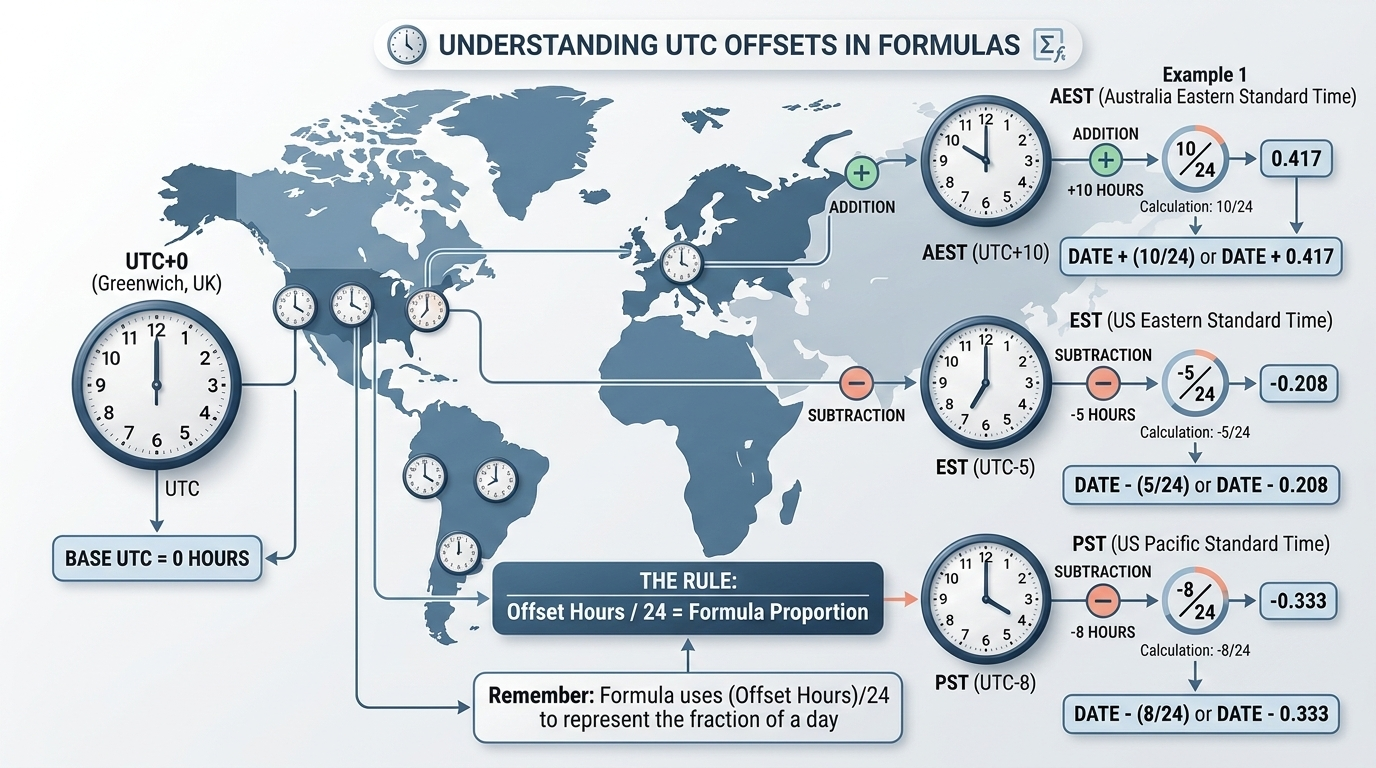

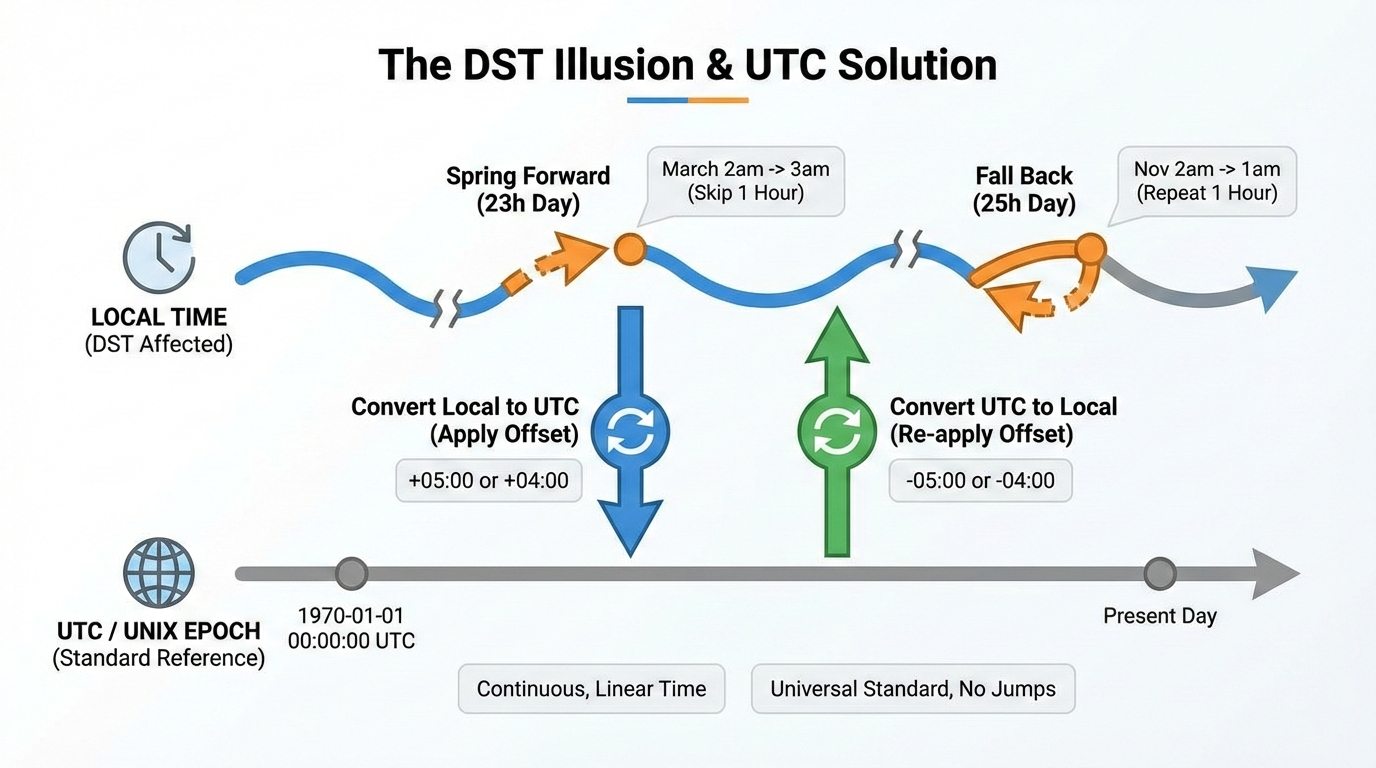

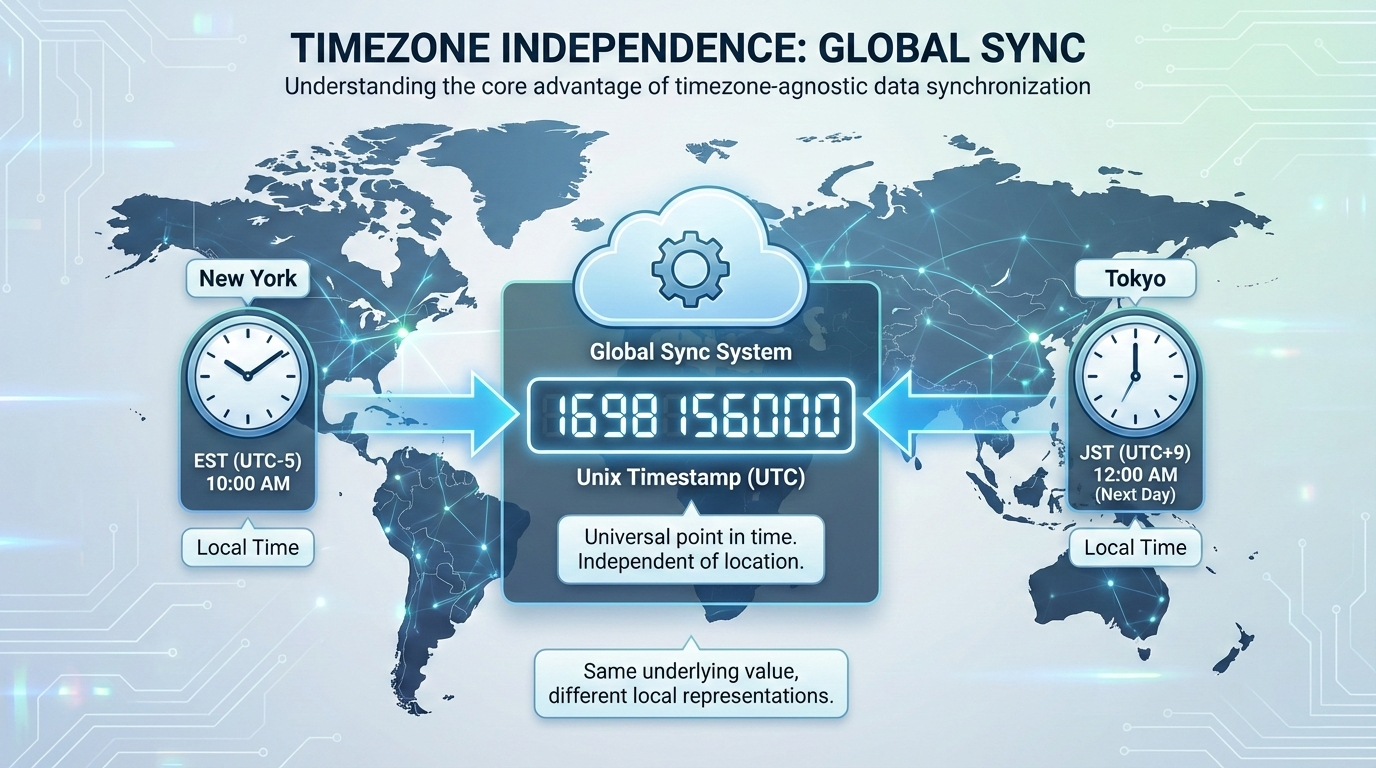

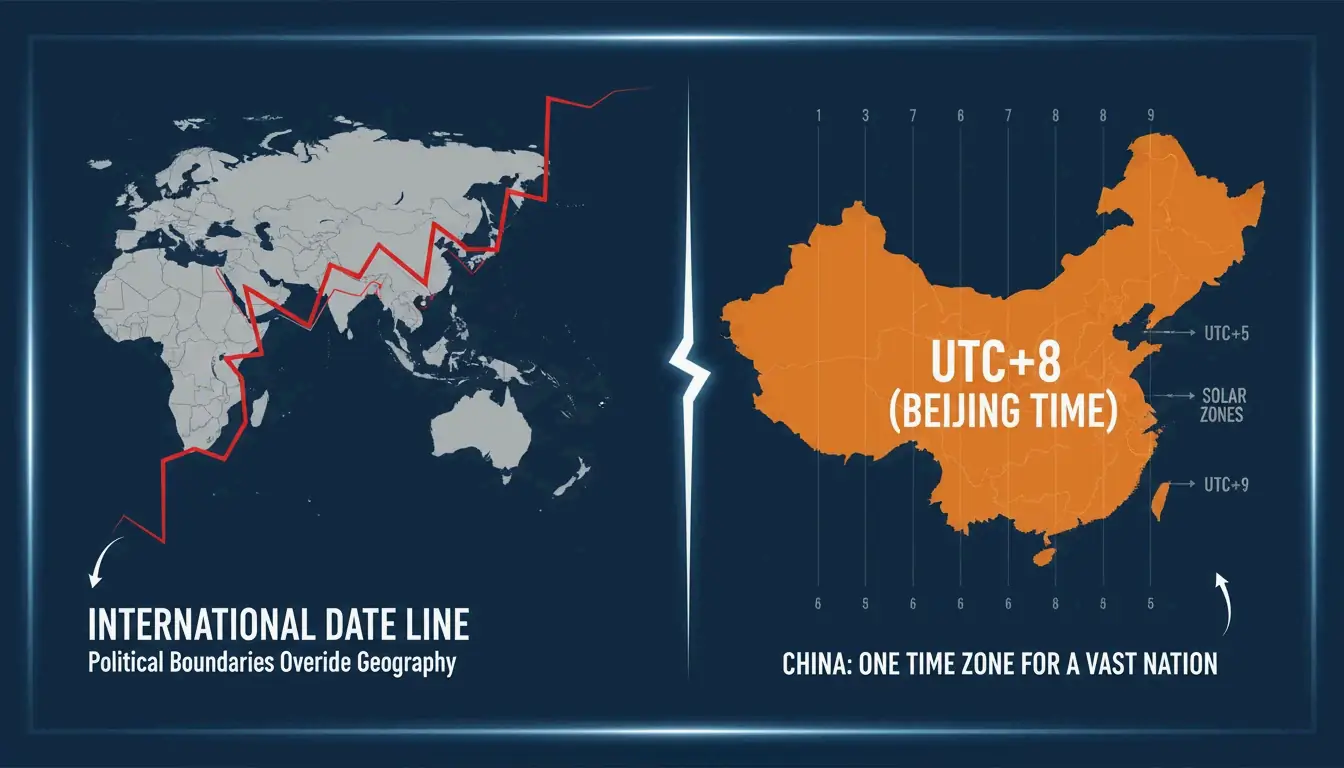

UTC provides an international time standard that stays the same no matter where you are on Earth. According to Wikipedia, UTC is an atomic time scale designed to approximate mean solar time at 0° longitude. By using UTC, computers in different time zones can agree on the same instant without exchanging any time zone information. The timestamp remains a constant number based on UTC, and the “local time” you see on your screen is only calculated at the very last step, when the value is displayed to the user.
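The idea that local time is only a display step can be sketched in Python. The example below takes one arbitrary timestamp and renders it at three fixed offsets. The offsets (UTC+09:00 and UTC-05:00) and the variable names are assumptions chosen for illustration; real applications would normally use named time zones with daylight-saving rules.

```python
from datetime import datetime, timedelta, timezone

ts = 1_700_000_000  # arbitrary example Unix timestamp, in seconds

utc = datetime.fromtimestamp(ts, tz=timezone.utc)
plus_nine = utc.astimezone(timezone(timedelta(hours=9)))    # fixed UTC+09:00
minus_five = utc.astimezone(timezone(timedelta(hours=-5)))  # fixed UTC-05:00

for view in (utc, plus_nine, minus_five):
    # The wall-clock text differs, the underlying timestamp does not.
    print(view.isoformat(), int(view.timestamp()))
```

Each line prints a different wall-clock string followed by the same integer, which is why the stored value can stay in UTC.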

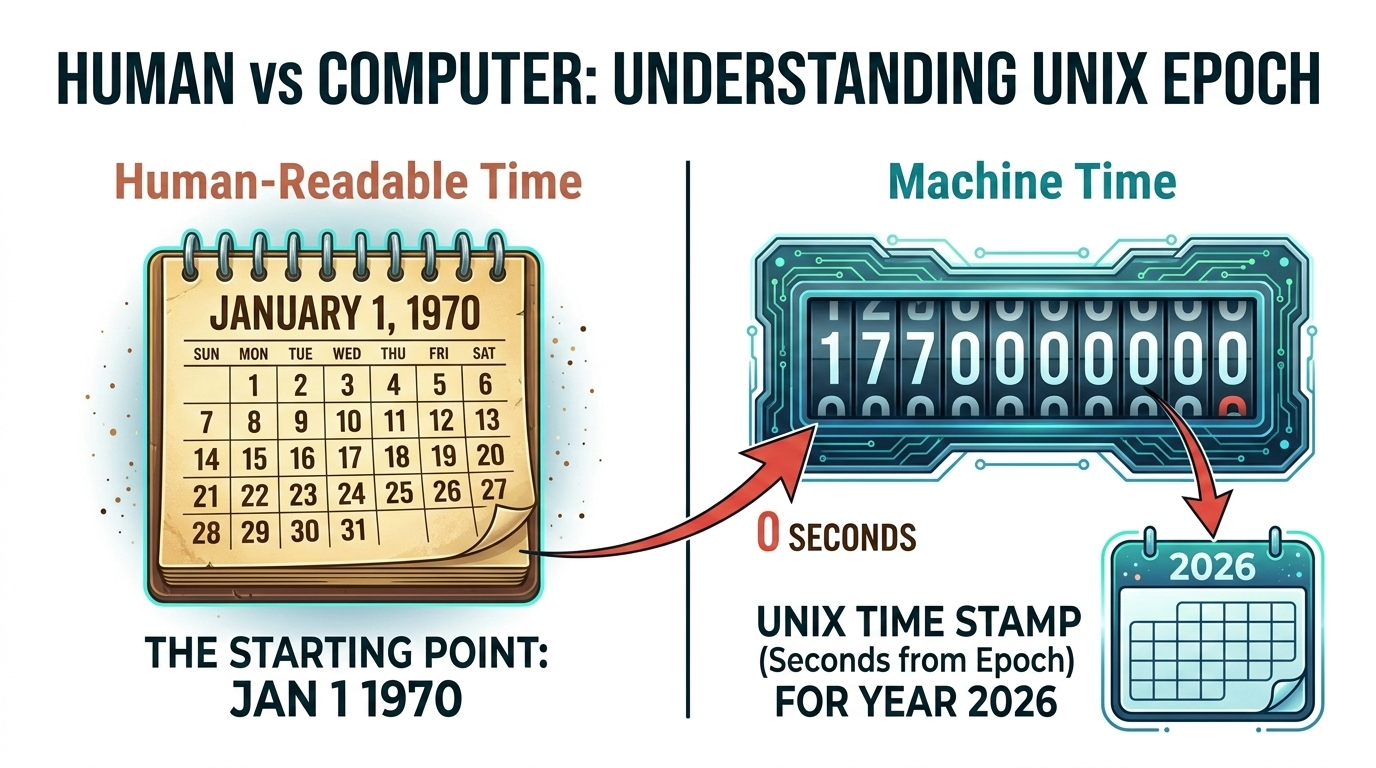

The Unix Epoch: The Standard for Global Computing

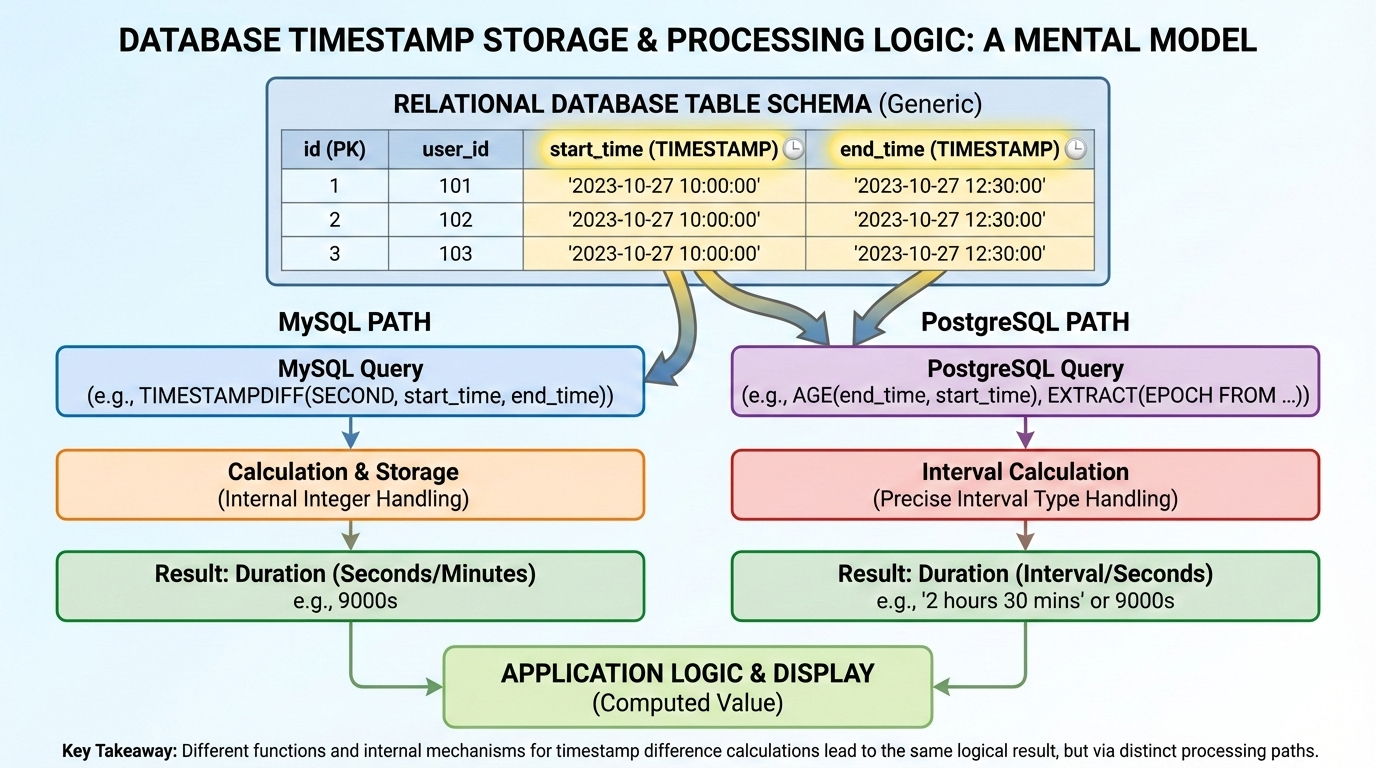

The most common way computers keep time is called Unix time. It measures time by counting the number of non-leap seconds that have passed since 00:00:00 UTC on Thursday, January 1, 1970—a moment known as the Unix Epoch. As explained by NIXX/DEV, a Unix timestamp is just a single integer with no timezone attached. This makes it unambiguous; if two systems record the same event at the exact same moment, they will produce the same number.

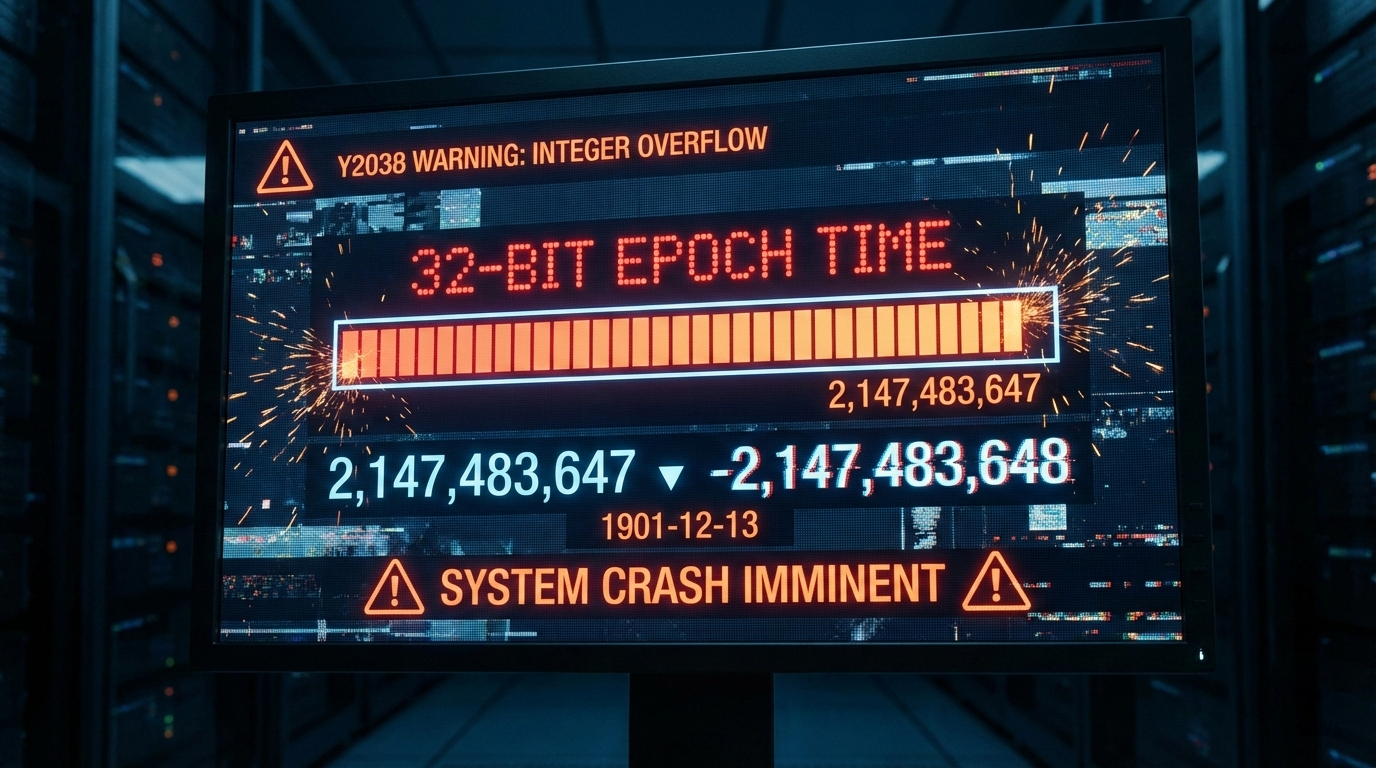

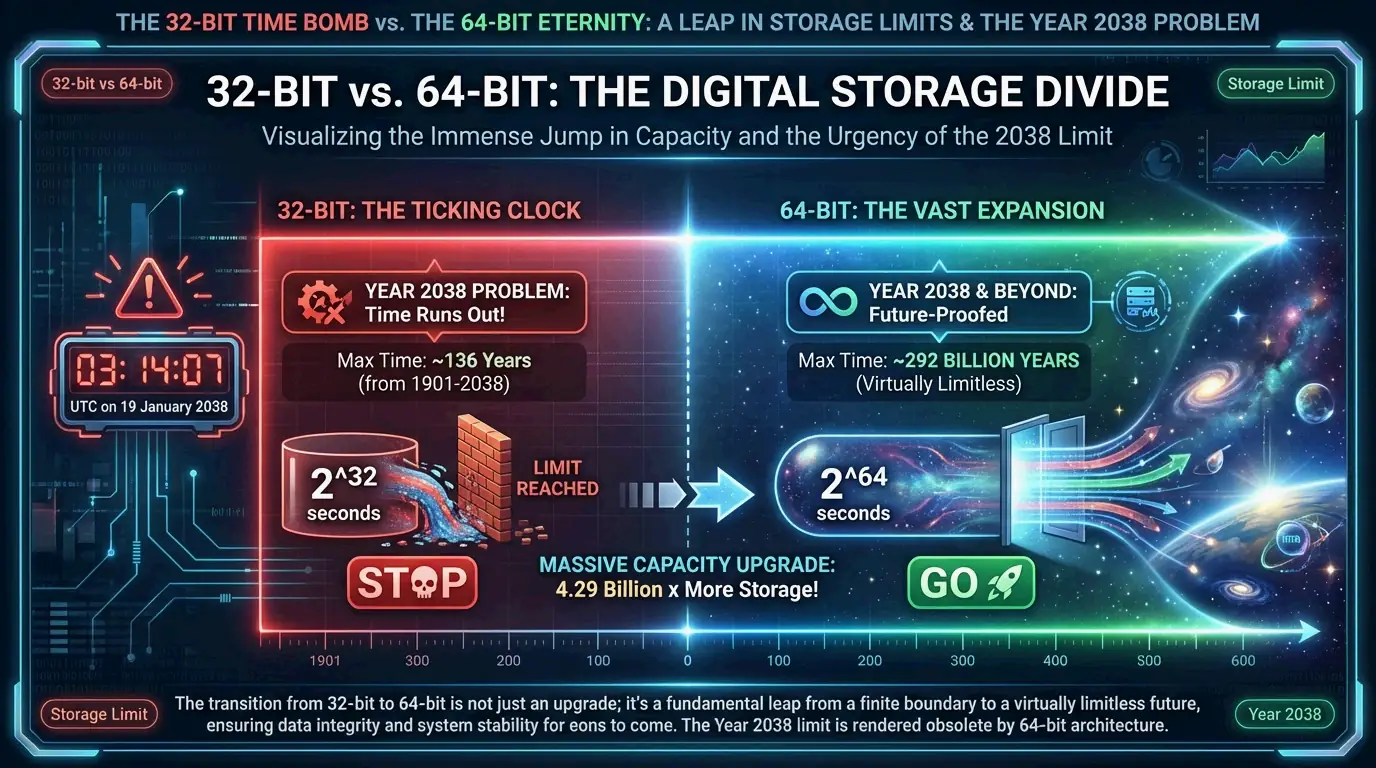

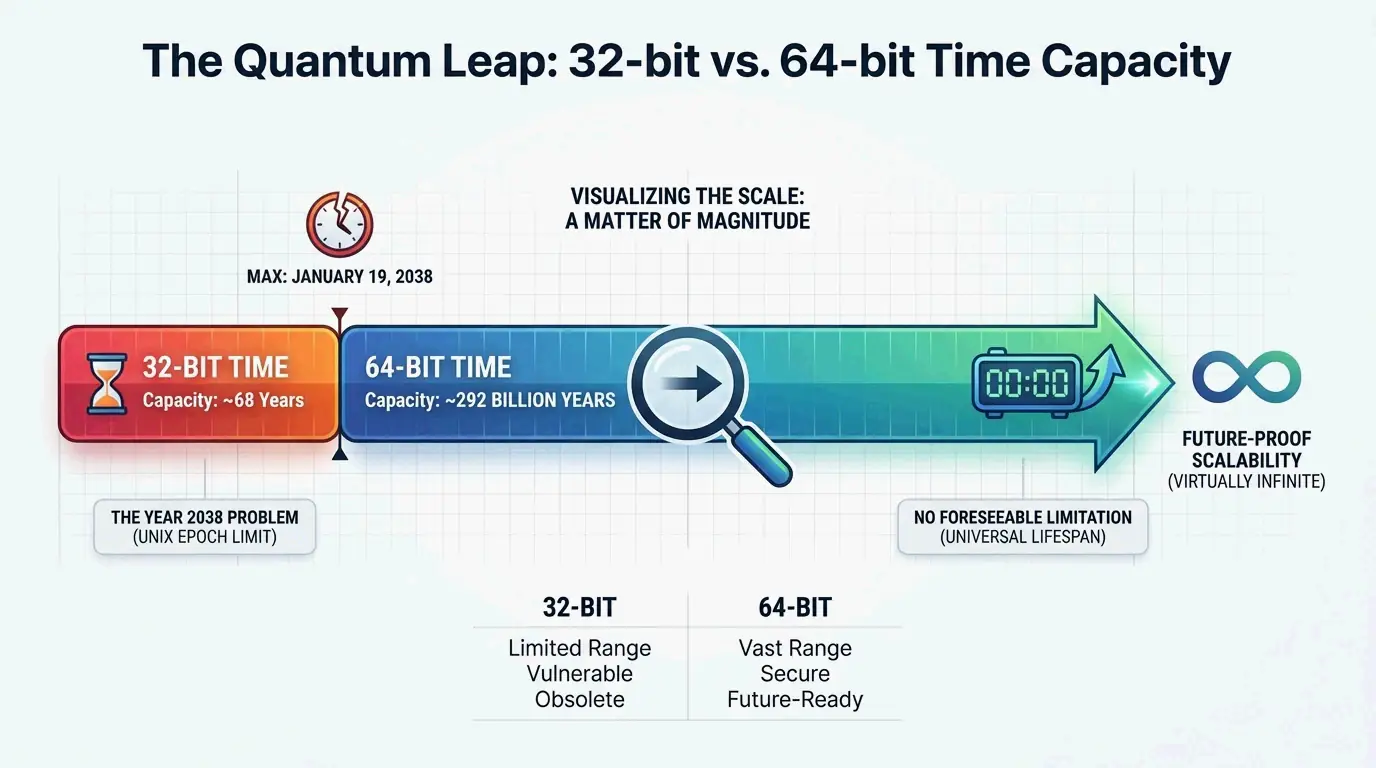

How these numbers are stored depends on the system’s architecture, specifically whether it uses 32-bit or 64-bit integers. A 32-bit signed integer can cover a range of about 136 years, which for Unix time means roughly 1901 to 2038. Relying on 32-bit integers has caused serious software headaches beyond timekeeping itself. A recent example is the Y2K22 Microsoft Exchange bug: on January 1, 2022, the malware-scanning engine failed because a date-based value stored in a signed 32-bit integer became larger than 2,147,483,647.
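The 136-year figure and the Y2K22 failure can both be checked with a little arithmetic. The sketch below is illustrative only: the average year length is an approximation, and the value 2201010001 is an assumed example of a date-derived number in a YYMMDDHHMM-style layout, not a value quoted in this article.

```python
INT32_MAX = 2**31 - 1      # 2,147,483,647
INT32_MIN = -(2**31)       # -2,147,483,648
SECONDS_PER_YEAR = 365.2425 * 86_400  # average Gregorian year (approximation)

# Total span a signed 32-bit counter of seconds can represent.
span_years = (INT32_MAX - INT32_MIN) / SECONDS_PER_YEAR
print(round(span_years, 1))  # 136.1

# Assumed example of a date-based number for 2022-01-01 00:01.
date_based_value = 2_201_010_001
print(date_based_value > INT32_MAX)  # True: it no longer fits in 32 bits
```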

One technical detail of Unix time is how it handles Leap Seconds. Unlike UTC, which adds leap seconds to keep up with the Earth’s slowing rotation, Unix time assumes every day has exactly 86,400 seconds. According to Wikipedia, this creates a tiny “jump” or repeat in the timestamp during a leap second event to keep the system aligned with UTC.
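A small Python sketch makes the 86,400-second assumption visible. It measures the Unix-time length of December 31, 2016, a day on which a leap second was inserted at 23:59:60 UTC. The variable names are illustrative.

```python
import calendar

# calendar.timegm converts a UTC time tuple to a Unix timestamp.
start = calendar.timegm((2016, 12, 31, 0, 0, 0))
end = calendar.timegm((2017, 1, 1, 0, 0, 0))

# Unix time reports 86,400 seconds even though 86,401 SI seconds elapsed.
print(end - start)  # 86400

# Every UTC midnight is therefore an exact multiple of 86,400.
print(end % 86_400)  # 0
```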

2026 Update: Is the ‘Year 2038 Problem’ Still a Threat?

As of April 2026, the move from 32-bit to 64-bit time storage is almost finished in mainstream tech, but some risks remain. The “Year 2038 Problem” happens because a signed 32-bit integer cannot hold a value larger than 2,147,483,647. Systems that store Unix time this way reach that limit on January 19, 2038, at 03:14:07 UTC. One second later the counter wraps around to a negative number, effectively making the date jump back to December 1901.
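The wraparound can be simulated in Python, whose own integers do not overflow, by forcing a value through a 32-bit C integer. This is a minimal sketch: `as_utc` is a hypothetical helper written for this example, and `ctypes.c_int32` stands in for a legacy 32-bit time field.

```python
import ctypes
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1

def as_utc(seconds: int) -> datetime:
    # Adding a timedelta to the Epoch also works for negative values.
    return EPOCH + timedelta(seconds=seconds)

last_ok = INT32_MAX
wrapped = ctypes.c_int32(INT32_MAX + 1).value  # simulate the 32-bit overflow

print(as_utc(last_ok).isoformat())  # 2038-01-19T03:14:07+00:00
print(wrapped)                      # -2147483648
print(as_utc(wrapped).isoformat())  # 1901-12-13T20:45:52+00:00
```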

Current status of the transition:

- Linux and Windows: Most modern versions have already switched to a 64-bit time_t structure.

- The Shift in Capacity: Moving to 64-bit integers extends the range to about 292 billion years, which is far longer than the age of the universe.

- Legacy Systems: According to Wikipedia, the threat is still real for embedded systems, older IoT devices, and databases that use 32-bit fields for old or future records.
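The 292-billion-year figure in the list above can be reproduced with one division. The sketch assumes an average Gregorian year of 365.2425 days, so the result is an approximation.

```python
INT64_MAX = 2**63 - 1                 # largest signed 64-bit value
SECONDS_PER_YEAR = 365.2425 * 86_400  # average Gregorian year (approximation)

years = INT64_MAX / SECONDS_PER_YEAR
print(f"{years / 1e9:.0f} billion years")  # 292 billion years
```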

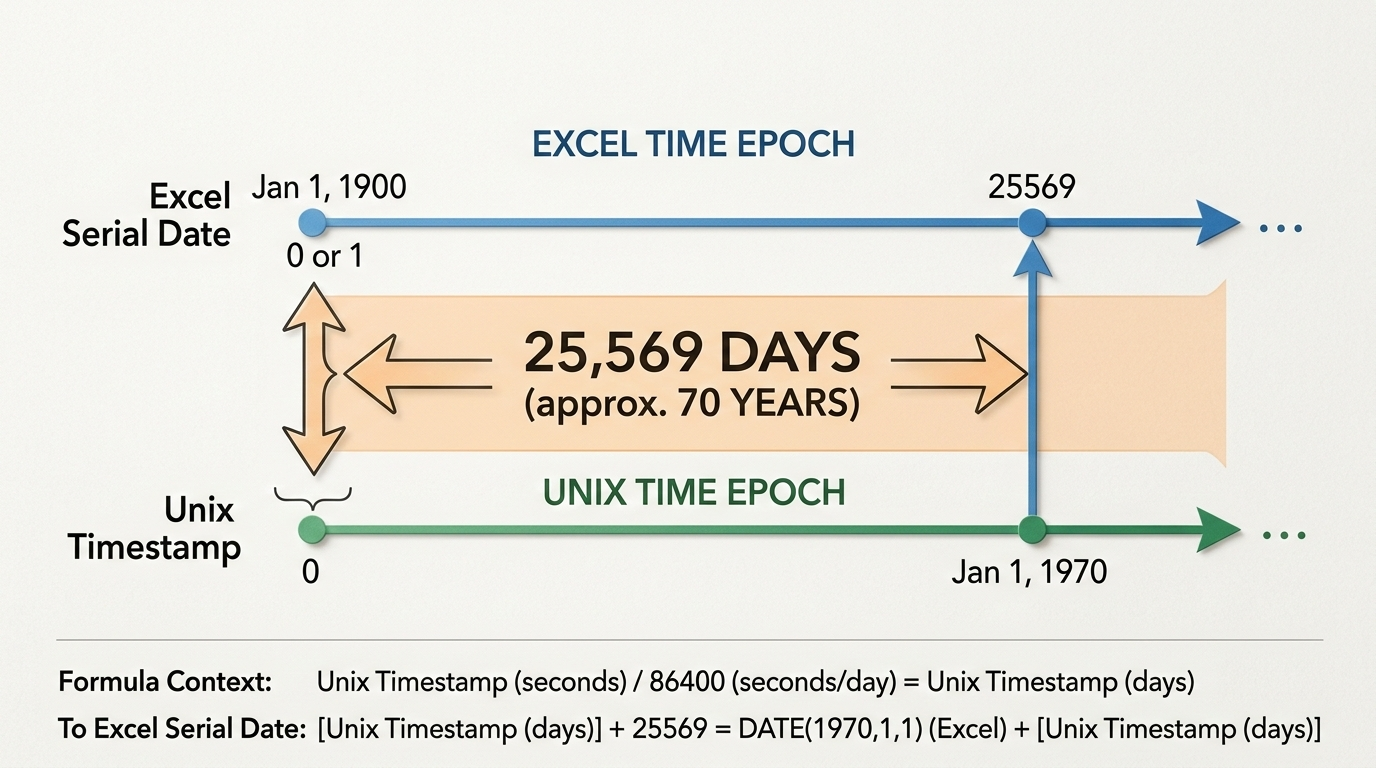

ISO 8601: Making Timestamps Human-Readable

Computers love integers, but humans need a structured string to make sense of time. ISO 8601 is the international standard for exchanging date and time data. According to Wikipedia, it uses a YYYY-MM-DDTHH:MM:SSZ format. The “T” separates the date from the time, and the “Z” (the zero-offset designator, often read aloud as “Zulu”) shows that the value is in UTC.

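This standard is a favorite for cloud computing and APIs because it is “lexicographically sortable.” Since the biggest unit (the year) is on the left and every field has a fixed width, databases can sort these strings chronologically with a plain text comparison. This only holds when all values use the same offset (typically “Z”) and the same precision.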
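The sorting property is easy to verify. The sketch below sorts a few made-up UTC timestamps once as plain text and once as parsed dates, then compares the results. The sample values are invented for illustration.

```python
from datetime import datetime

stamps = [
    "2026-04-22T14:30:00Z",
    "2025-12-31T23:59:59Z",
    "2026-01-05T08:00:00Z",
]

# Plain string comparison, no date parsing involved.
by_text = sorted(stamps)

# Chronological order, obtained by parsing each string first.
# The "Z" suffix is rewritten because older Python versions reject it.
by_time = sorted(
    stamps,
    key=lambda s: datetime.fromisoformat(s.replace("Z", "+00:00")),
)

print(by_text == by_time)  # True
```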

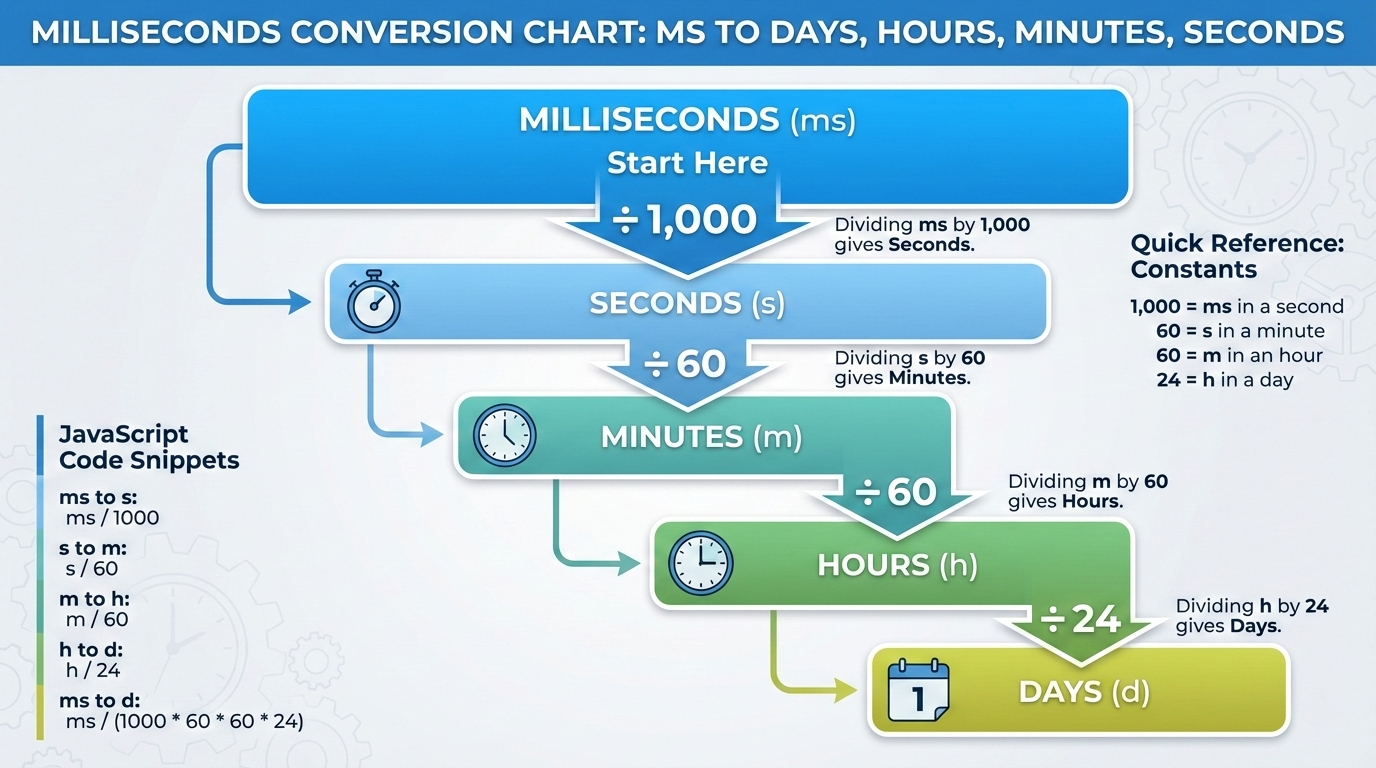

Code Snippet: Converting Timestamps in 2026

In 2026, most developers use standard libraries to turn Unix numbers into ISO 8601 strings. In JavaScript, for example, new Date().toISOString() instantly turns the current Unix timestamp (in milliseconds) into a readable string like 2026-04-22T14:30:00.000Z. According to NIXX/DEV, these tools are vital for checking API responses and reading server logs that store raw epoch values.
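The same conversion can be sketched in Python with the standard library. The two helper functions below are hypothetical names written for this example; they mirror the millisecond-based output of the JavaScript call described above and use integer arithmetic to avoid floating-point rounding.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def epoch_ms_to_iso(ms: int) -> str:
    """Format a Unix timestamp in milliseconds as an ISO 8601 UTC string."""
    moment = EPOCH + timedelta(milliseconds=ms)
    return moment.isoformat(timespec="milliseconds").replace("+00:00", "Z")

def iso_to_epoch_ms(text: str) -> int:
    """Parse an ISO 8601 string ending in Z back into milliseconds."""
    moment = datetime.fromisoformat(text.replace("Z", "+00:00"))
    return (moment - EPOCH) // timedelta(milliseconds=1)

print(epoch_ms_to_iso(0))                           # 1970-01-01T00:00:00.000Z
print(iso_to_epoch_ms("2026-04-22T14:30:00.000Z"))  # 1776868200000
```

Round-tripping a value through both helpers should return the original string, which is a handy sanity check when reading raw epoch values in logs.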

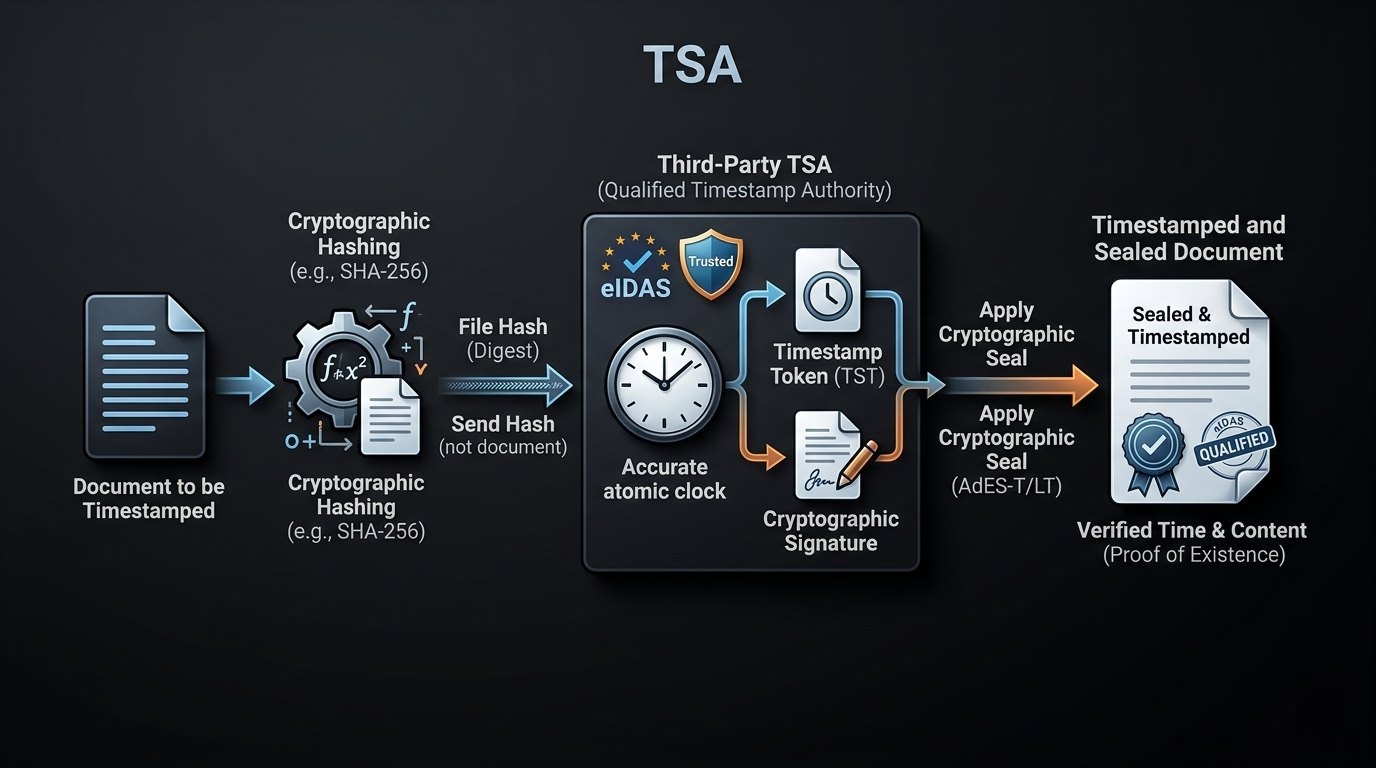

Blockchain and Security: Why Timestamps Can’t Be Faked

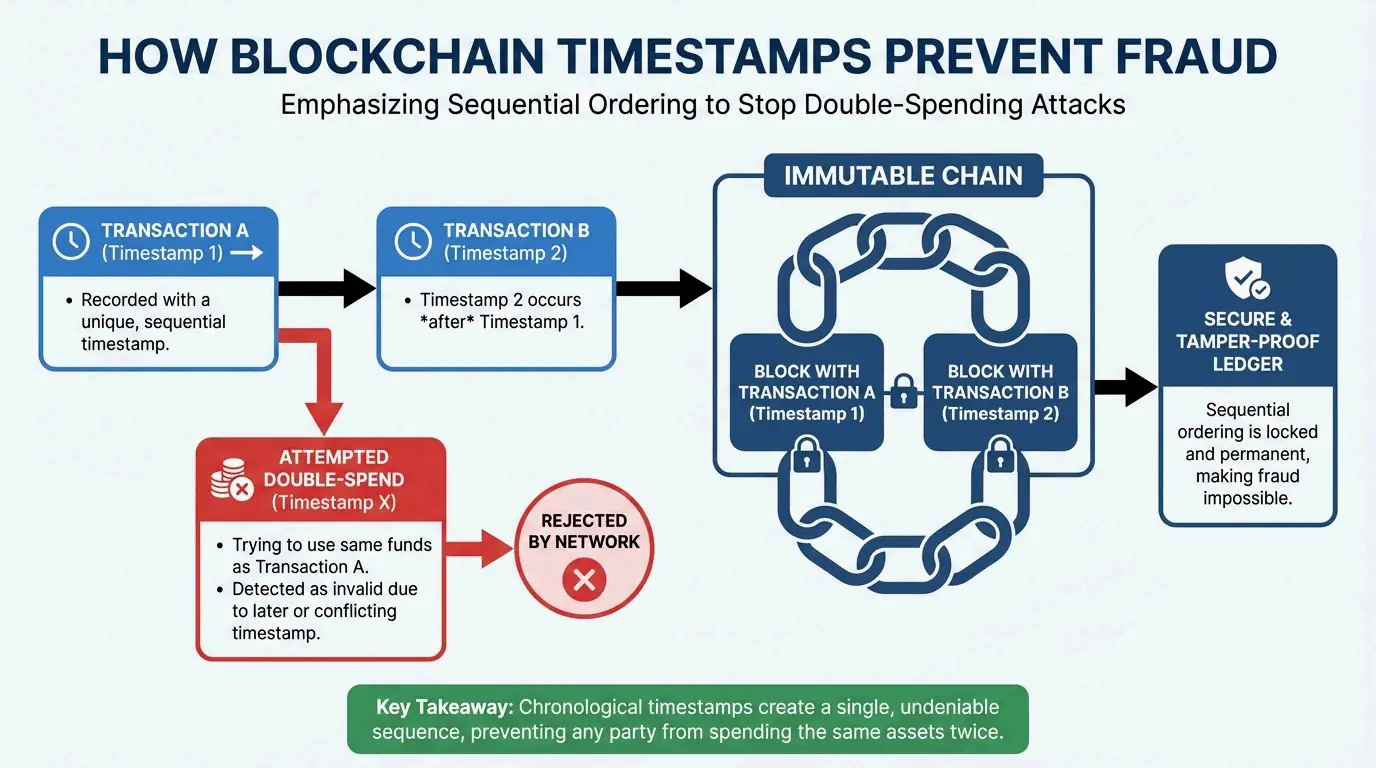

In decentralized systems like blockchain, timestamps are a primary defense against fraud. As Finst explains, they ensure all transactions are recorded in the right order, creating a history that anyone can verify but no one can change.

Satoshi Nakamoto’s design relied on this chronological order to solve the “double-spending” problem. As noted by Finst, “Satoshi Nakamoto… described that timestamps are essential for preventing problems like double spending and for establishing a reliable order of transactions.” In Bitcoin, every new block must have a timestamp later than the median of the previous 11 blocks. This keeps the blockchain moving forward and proves which transaction happened first, preventing users from spending the same digital asset twice.
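The median rule described above can be sketched in a few lines of Python. This is a simplified illustration, not Bitcoin’s actual implementation: the function name and sample values are hypothetical, and only the single rule mentioned in this section is modeled.

```python
from statistics import median
from typing import Sequence

def is_timestamp_acceptable(new_ts: int, previous: Sequence[int]) -> bool:
    """Return True if new_ts is later than the median of the last 11 block times."""
    window = list(previous)[-11:]
    return new_ts > median(window)

# Hypothetical block timestamps, deliberately not in strict order.
previous_blocks = [1000, 1010, 1005, 1020, 1030, 1025, 1040, 1050, 1045, 1060, 1070]

print(median(previous_blocks))                         # 1030
print(is_timestamp_acceptable(1031, previous_blocks))  # True
print(is_timestamp_acceptable(1030, previous_blocks))  # False
```

Using the median rather than the latest block time means a single block with a skewed clock cannot drag the accepted range far in either direction.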

Conclusion

Digital timestamps are the invisible glue of our modern world. They translate raw numbers into a synchronized reality using standards like the Unix Epoch and ISO 8601. By counting seconds from a fixed starting point, our systems maintain the precise, clear records needed for everything from global stock markets to secure blockchains.

As we get closer to 2038, finishing the transition to 64-bit integers is a top priority for keeping our infrastructure stable. Developers should check that legacy 32-bit systems are updated soon to avoid overflow errors, and use ISO 8601 strings for API data to ensure different platforms can always talk to each other clearly.

FAQ

… (Remaining text)
